🔒 The Anchoring Effect Is Real

Out of 18 deliberation runs, an overwhelming 17 ended in a "hung jury" (no unanimous verdict). Unlike the movie, where one dissenter slowly persuades the rest, current LLMs stubbornly anchor to their initial positions and rarely change their minds.

⚖️ Alignment Makes AIs Rigid

The study compared GPT-4o (heavy safety alignment/RLHF) and Llama-4-Scout (lighter alignment). GPT-4o was highly inflexible, averaging just 1.0 vote change per run and ignoring explicit prompts to be "open-minded". Llama-4-Scout, by contrast, was responsive, tripling its vote-change rate when asked to keep an open mind.
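
For readers who want to compute the same kind of metrics on their own deliberation logs, here is a minimal sketch of how "vote changes per run" and "hung jury" could be measured. The log format (one list of rounds per run, each round mapping juror to vote), the function names, and the toy data are all assumptions for illustration. They are not the study's actual code or results.

```python
from statistics import mean

# Assumed (hypothetical) log format: a run is a non-empty list of rounds,
# and each round maps a juror name to that juror's vote in that round.
Run = list[dict[str, str]]


def count_vote_changes(run: Run) -> int:
    """Count every time a juror's vote differs from their vote in the previous round."""
    changes = 0
    for prev, curr in zip(run, run[1:]):
        changes += sum(
            1 for juror, vote in curr.items()
            if juror in prev and prev[juror] != vote
        )
    return changes


def is_hung(run: Run) -> bool:
    """A run counts as hung if the final round is not unanimous."""
    return len(set(run[-1].values())) > 1


def summarize(runs: list[Run]) -> dict[str, float]:
    """Aggregate the two headline metrics across runs."""
    return {
        "hung_runs": sum(is_hung(r) for r in runs),
        "mean_vote_changes": mean(count_vote_changes(r) for r in runs),
    }


# Toy data, invented purely to exercise the functions above.
toy_runs: list[Run] = [
    [  # one juror flips, but the final round is still split
        {"j1": "guilty", "j2": "not guilty", "j3": "guilty"},
        {"j1": "guilty", "j2": "not guilty", "j3": "not guilty"},
    ],
    [  # one juror flips and the final round is unanimous
        {"j1": "guilty", "j2": "not guilty"},
        {"j1": "not guilty", "j2": "not guilty"},
    ],
]

print(summarize(toy_runs))
```

Counting changes between consecutive rounds, rather than only comparing first and last votes, also captures jurors who flip and then flip back, which is one plausible reading of "vote change" here.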

🏆 The Only Verdict

Across all tests, Llama-4-Scout was the only model capable of breaking the deadlock and reaching a unanimous "Not Guilty" verdict (in a scenario where initial votes were removed).

📉 Bigger Isn't Always Better

We often assume larger, highly capable models perform better at everything. But in multi-agent debates, flexibility, not raw capability, is what actually mimics human persuasion. The very same RLHF training that makes an AI a "safe" and consistent assistant also makes it a rigid, stubborn collaborator.

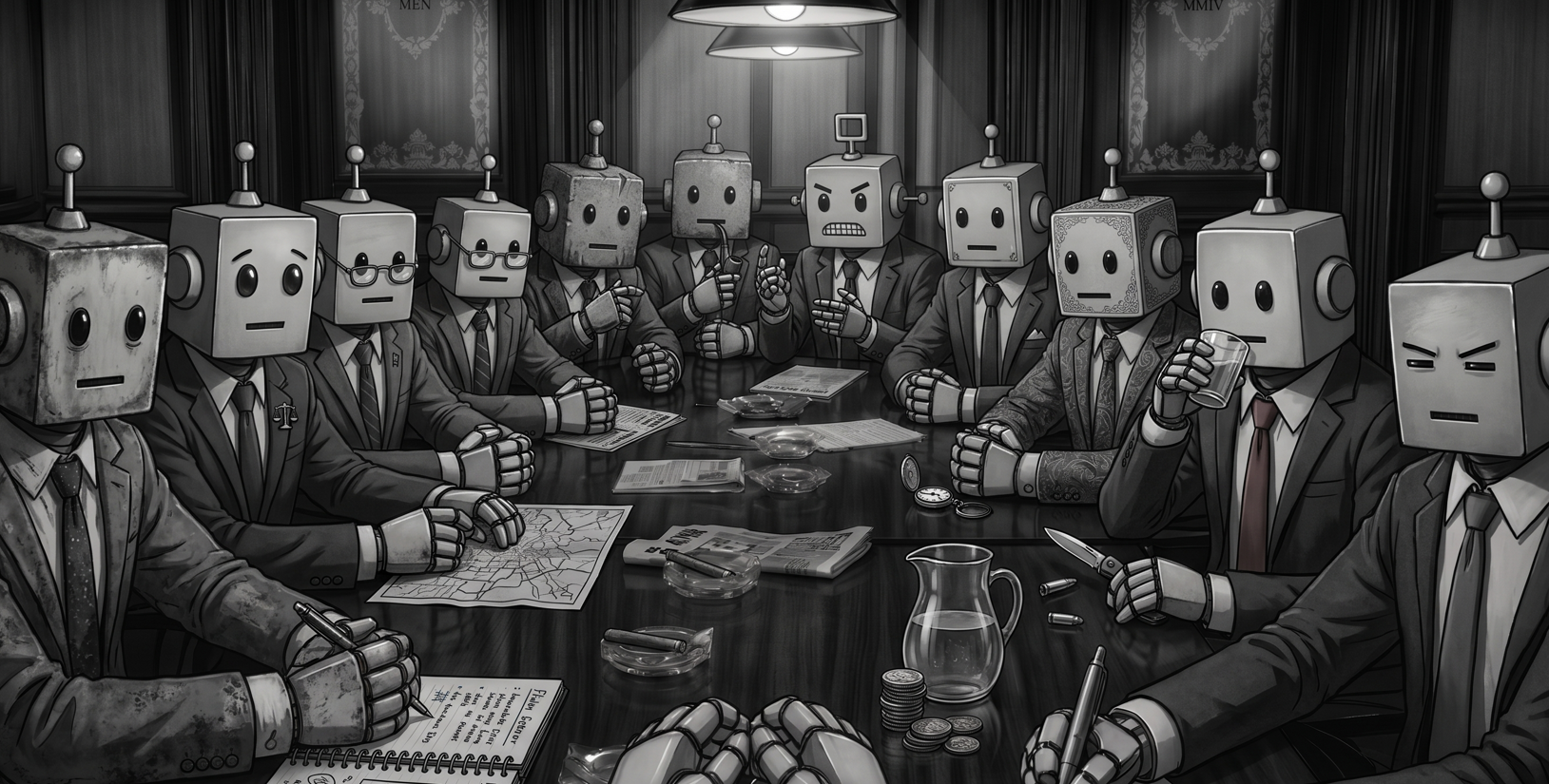

🗣️ Parallel Monologues, Not True Debate

While the AI agents perfectly mimicked their character personas and cited the right evidence (wearing the "costume" of a debate), they failed at the actual social mechanism of mind-changing. There were no emotional ruptures and no coalition building, just parallel monologues: voices talking past each other.